[vc_row][vc_column][vc_column_text]

BERT NLP Explained: The Latest NLP Model

You’ve probably encountered this term several times by now, but what is the acronym BERT short for? It stands for Bidirectional Encoder Representations from Transformers. In this article, we’ll explain what BERT is, how it is affecting the world on neuro-linguistic programming, and how it can ultimately impact customer experience analytics.

BERT was introduced in a paper published by a group of researchers at Google AI Language. It’s defined as a “groundbreaking” technique for natural language processing (NLP), because it’s the first-ever bidirectional and completely unsupervised technique for language representation. It’s open-sourced and can be easily used by anyone with machine learning experience.

A Transformer is a popular attention mechanism used to learn contextual relations between words in a text using artificial intelligence.

This model is described as “bidirectional.” In contrast with directional models—which can read a text from either left-to-right or right-to-left—BERT can read a text (or a sequence of words) all at once, with no specific direction. Thanks to its bidirectionality, this model can understand the meaning of each word based on context both to the right and to the left of the word; this represents a clear advantage in the field of context learning.

Google’s BERT is based on two main techniques: Mask Language Model (MLM) and Next Sentence Prediction (NSP).

Here’s how it works: Say you want to feed a text into BERT. First, in order to train itself, the model replaces 15% of the words in each word sequence with a [MASK] token; then, it tries to predict the original tokens, based on all the other words in the sequence, which are not masked; in other words, based on the context.

For the second technique, the model analyzes pairs of sentences and learns to predict if they’re subsequential or not. For example, the sentences (1) “She’s a star in her field; (2) Everyone wants to work with her, are subsequential, as the second sentence follows the first. Instead, (1) She’s a star in her field; (2) It’s raining tomorrow, are clearly not tied to each other.

How BERT Will Change NLP

BERT is already making significant waves in the world of natural language processing (NLP). Several developments have come out recently, from Facebook’s RoBERTa (which does not feature Next Sentence Prediction) to ALBERT (a lighter version of the model), which was built by Google Research with the Toyota Technological Institute.

The new model features an important improvement when it comes to context understanding. Especially with certain texts which are “context-heavy,” for which context understanding is so important for analytics purposes, BERT is a great solution.

Just think of all those words that can have multiple meanings depending on the context (homonyms). Say we have two sentences like:

- They’re planning to go on a second date.

- They’re trying to find a good date for the conference.

In the first sentence, the word “date” means romantic meeting; in the second sentence, it refers to a day/month/year. We know that because of the context.

Another example:

- She’s a star in her field.

- It was so cloudy, we couldn’t find one single star in the sky.

In the first sentence, the word “star” refers to a successful person; in the second one, it’s an object in space. Again, we know that because of the context.

Because BERT practices to predict missing words in the text, and because it analyzes every sentence with no specific direction, it does a better job at understanding the meaning of homonyms than previous NLP methodologies, such as embedding methods.

It’s widely reported that this new model is slower than its predecessors. However, most experts in the field agree that it outperforms the other models, because of its stronger awareness of the context of each text it analyzes.

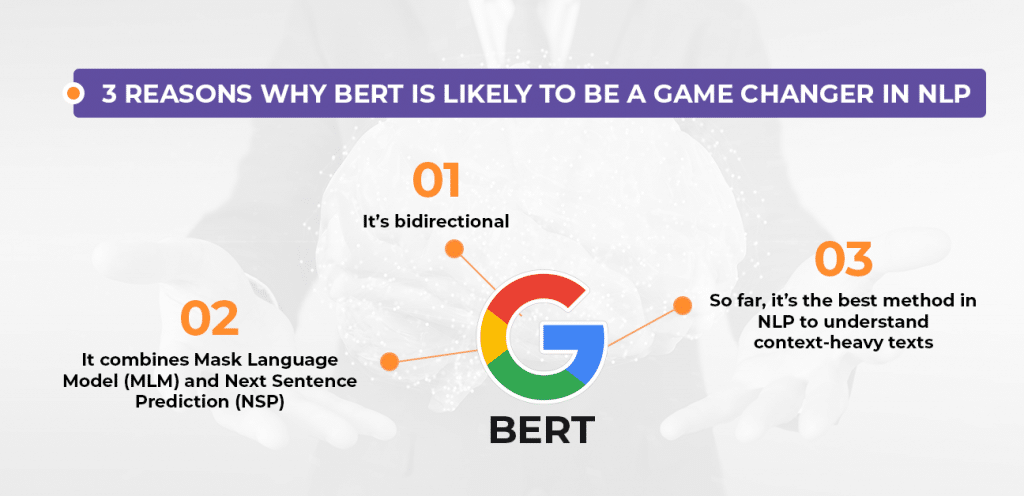

To summarize, these are the 3 reasons why BERT is likely to be a game changer in NLP:

- It’s bidirectional

- It combines Mask Language Model (MLM) and Next Sentence Prediction (NSP).

- So far, it’s the best method in NLP to understand context-heavy texts

Will BERT Impact CX Analytics?

here are some common applications of BERT already in use that can be found in Google Search and Gmail. For example, Google Search Autocomplete, as well as Suggested Replies and Smart Compose in Gmail. (Ever wondered how Google can formulate suggested replies based on an email you’ve received? Yeah, BERT might have helped a bit, there.)

However, it’s important to note that BERT is a work in progress, and so are its applications. While there are not many tasks in Customer Experience Analytics being performed with BERT yet, it’s easy to speculate how impactful this new methodology can be in this growing field.

One of the top methods used in CX Analytics is Sentiment Analysis, which is the automated process to interpret whether the sentiment behind a text is positive, negative, or neutral.

In order to perform Sentiment Analysis, CX Analytics companies like Revuze use text analytics, the automated process to analyze a piece of writing. Text analytics is based on different NLP (natural language processing) techniques, and BERT is likely to become one of the most useful techniques for CX Analytics tasks in the near future.

In addition, CX Analytics relies on text analytics in a lot of different fields, which are often jargon-based and context-specific depending on the industry. Because BERT’s strength lies in context understanding, there’s a lot that we can benefit from here.

It’s safe to say that you’ll increasingly hear this four-letter acronym more and more often over the next year!

About Revuze

Revuze’s AI-powered solution enables brands to quickly understand their product and customer satisfaction issues, and to automatically score and rank their brand’s performance relative to its competitors and to the market.

If you want to learn more about Customer Analytics and Sentiment Analysis, check out our Blog.

[/vc_column_text][/vc_column][/vc_row][vc_row][vc_column][/vc_column][/vc_row]

All

Articles

All

Articles Email

Analytics

Email

Analytics

Agencies

Insights

Agencies

Insights